
12/12/2022 0 Comments Image deduplicator  With a 2:1 compression ratio, the backup administrator needs to provision between 10 to 75TB for every full backup, plus have more space for incremental and differential backups. PCs contain from 20 to 150TB (terabytes) of data. An average laptop can hold from 50 to a few hundred gigabytes of data on the hard disk. The “sheer volume of data” was given as one of the primary reasons why.įor example, let’s look at a company with 400 employees who use desktops and laptops.

However, 75 percent of small-to medium-sized businesses (SMBs) surveyed by Acronis and IDC (International Data Corporation) admit that their data is not fully protected. Otherwise your company can lose money, reputation, time - your entire business can even shut down. You must protect and back up all this data. Every 10 minutes, humanity creates as much data volume as was created from the dawn of civilization until the year 2000. Metrics are meaningless without context.In 1990, the hard disk of a personal computer was 10 megabytes. Have a strong baseline measure focus your project on comparing different solutions, not on having a single strong accuracy metric. It would be more powerful if it works after training on a different dataset but you could compare. Probably fine if it works, although like i said it might lose a lot of detail close to the output without the autoencoder architecture, because the final classification doesn't really need spatial content information anymore. but since your project requires content preservation, maybe classification is limiting in the lowest layers? i wonder if a FCNN autoencoder that goes to a low resolution (without MLP bottleneck) might be more appropriate. I would use a standard imagenet classification architecture, your choice is probably fine. Not saying you are doing anything wrong by doing it this way, but you should at least develop a strong baseline using classical methods if you want to validate it.Įdit: but i can do you the courtesy of answering some of your questions. Have you tried comparing against a drastically simpler solution, like for example normalizing and scaling the images to the same size, then convolving them to get a correlation metric? Have you done any reading on image comparison / fingerprinting? This is a topic that has been already well explored. Should I use a model specifically trained on my dataset? 
My final project is based on classifying the dataset.ĭo you have a solution better than the method I am currently using? (Feature Extraction + Cosine Similarity)Īny other suggestions to improve my current pipeline? What layer should I extract features from? Right now, I am extracting features from the Pooling layers at the end of the model.

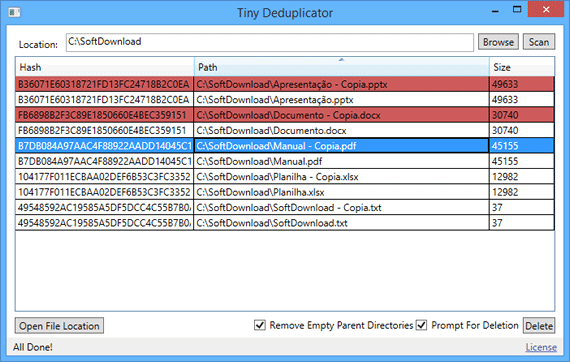

What model architecture should I use for extracting features from? Currently, I am using a pre-trained (on the ImageNet Dataset) PyTorch EfficentNet model to extract the features. I had a few questions regarding this problem: You can imagine these as the photos you take from your mobile phones of the same thing but by slightly moving your hand position. For every cosine similarity value > threshold, I call them duplicates.īy duplicates, I mean images that are very similar but differ slightly in skew, brightness, rotation and crop. The method I am using consists of extracting features for each image from a ConvNet and computing Cosine Similarity values for each pair of images.

I want to detect and remove duplicate images from a dataset.
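The pipeline in the question and the simpler correlation baseline from the reply share the same final step. Each image becomes a vector, every pair of vectors is compared, and pairs above a threshold are flagged. Below is a minimal NumPy sketch of that step. It assumes the ConvNet features, or the grayscale images already resized to a common size, are available as arrays; feature extraction and resizing are not shown. The function names and the 0.9 threshold are hypothetical; in practice the threshold would be tuned on labelled pairs. The correlation part compares images only at zero offset, rather than sliding one image over the other as a full convolution would.

```python
from typing import List, Tuple

import numpy as np


def l2_normalize(x: np.ndarray, eps: float = 1e-12) -> np.ndarray:
    """Scale each row to unit length so that dot products become cosine similarities."""
    norms = np.linalg.norm(x, axis=1, keepdims=True)
    return x / np.maximum(norms, eps)  # eps guards against all-zero rows


def find_duplicate_pairs(features: np.ndarray, threshold: float) -> List[Tuple[int, int, float]]:
    """Return (i, j, similarity) for every pair i < j whose cosine similarity exceeds `threshold`.

    `features` is an (n_images, dim) array, e.g. pooled ConvNet embeddings
    (assumed to be extracted beforehand; not shown here).
    """
    unit = l2_normalize(np.asarray(features, dtype=np.float64))
    sims = unit @ unit.T                              # (n, n) cosine-similarity matrix
    rows, cols = np.triu_indices(len(unit), k=1)      # each unordered pair exactly once
    keep = sims[rows, cols] > threshold
    return [(int(i), int(j), float(sims[i, j])) for i, j in zip(rows[keep], cols[keep])]


def correlation_vectors(images: np.ndarray) -> np.ndarray:
    """Classical baseline: flatten same-size grayscale images and subtract each image's mean.

    After the L2 normalisation inside find_duplicate_pairs, the cosine similarity of
    these vectors equals the Pearson correlation of the two images' pixels,
    i.e. normalised cross-correlation at zero offset (no sliding over shifts).
    """
    flat = np.asarray(images, dtype=np.float64).reshape(len(images), -1)
    return flat - flat.mean(axis=1, keepdims=True)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    base = rng.random((32, 32))
    images = np.stack([
        base,                    # original
        base * 0.8 + 0.1,        # same image with a brightness/contrast change
        rng.random((32, 32)),    # unrelated image
    ])
    # Expected: a single pair (0, 1) with similarity close to 1.0.
    print(find_duplicate_pairs(correlation_vectors(images), threshold=0.9))
```

As the demo shows, pixel correlation is unaffected by global brightness and contrast changes. It typically drops quickly under rotation, skew, or cropping, though. That gap is what the ConvNet features are meant to close, and measuring it is the kind of baseline comparison the reply recommends.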
